(2) /home/user/miniconda/envs/xgboost-1.0.2-cuda-10.1/lib/libxgboost.so(xgboost::gbm::GBTree::Configure(std::vector, std::allocator >, std::_cxx11::basic_string, std::allocator >, std::allocator, std::allocator >, std::_cxx11::basic_string, std::allocator > const&)+0x27f) : /home/conda/feedstock_root/build_artifacts/xgboost_1584539733809/work/include/xgboost/gbm.h:166: XGBoost version not compiled with GPU support.

_check_call(_LIB.XGBoosterUpdateOneIter(self.handle,
File "/home/user/miniconda/envs/xgboost-1.0.2-cuda-10.1/lib/python3.8/site-packages/xgboost/core.py", line 189, in _check_call
raise XGBoostError(py_str(_LIB.XGBGetLastError()))
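The error above says the installed library was built without GPU support. One way to keep a script usable on such a build is to retry on the CPU when that specific message appears. The following is only a minimal sketch under stated assumptions: `train_with_cpu_fallback` and `train_fn` are hypothetical names (not part of xgboost), `train_fn` stands for any callable that takes a parameter dict and trains a model, and the error is recognised purely by the message text from the traceback.

```python
# Hypothetical helper, not part of xgboost: retry training on the CPU when the
# installed build reports that it was compiled without GPU support.

# Message fragment taken from the traceback in the question.
GPU_ERROR_MARKER = "not compiled with GPU support"


def train_with_cpu_fallback(train_fn, params, cpu_tree_method="hist"):
    """Call train_fn(params); on the GPU-support error, retry with CPU params.

    train_fn is assumed to be any callable accepting a parameter dict.
    Returns a (model, params_actually_used) tuple.
    """
    try:
        return train_fn(params), params
    except Exception as err:
        # Only handle the specific "no GPU support" failure; re-raise the rest.
        if GPU_ERROR_MARKER not in str(err):
            raise
        cpu_params = dict(params)
        cpu_params["tree_method"] = cpu_tree_method  # CPU histogram method
        cpu_params.pop("gpu_id", None)               # GPU-only setting
        if cpu_params.get("predictor") == "gpu_predictor":
            cpu_params.pop("predictor")
        return train_fn(cpu_params), cpu_params
```

With xgboost itself, `train_fn` could be something like `lambda p: xgb.train(p, dtrain)`. The fallback only hides the symptom; installing a build that was compiled with GPU support is still needed to train on the GPU.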


File "/home/zeth/nextflow_projects/nextflow_machine_learning/bin/train_boston_xgboost.py", line 10, in
xgb.train(params, dtrain, evals=)
File "/home/user/miniconda/envs/xgboost-1.0.2-cuda-10.1/lib/python3.8/site-packages/xgboost/training.py", line 205, in train
File "/home/user/miniconda/envs/xgboost-1.0.2-cuda-10.1/lib/python3.8/site-packages/xgboost/training.py", line 74, in _train_internal
File "/home/user/miniconda/envs/xgboost-1.0.2-cuda-10.1/lib/python3.8/site-packages/xgboost/core.py", line 1247, in update

Now, when the function is called to get the optimum parameters:

#Choose all predictors except target & IDcols
predictors = ]

Although the feature importance chart is displayed, the parameters info in the red box at the top of the chart is missing.

I consulted people who use Linux/macOS and have xgboost installed. I was wondering whether this is due to the specific implementation, since I built and installed it on Windows. And how can I get the parameters info displayed above the chart? As of now, I am getting the chart but not the red box and the info within it.
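In the function as posted, the accuracy and AUC figures are written with `print` rather than drawn on the plot, so they would normally show up in the console or notebook output, not inside the chart. If the goal is to see them above the chart itself, one possible approach is to build the report text once and reuse it as the plot title. This is a minimal sketch, not the original author's method; `format_model_report` is a hypothetical helper name.

```python
# Hypothetical helper: build the report text once so it can be printed and
# also reused as a chart title.

def format_model_report(accuracy, auc):
    """Return the model report as one multi-line string.

    The format strings mirror the print statements in the posted function.
    """
    return "Model Report\nAccuracy : %.4g\nAUC Score (Train): %f" % (accuracy, auc)
```

The returned string could be printed as before and also passed as the `title` argument of `feat_imp.plot(kind='bar', ...)` in place of the fixed `'Feature Importances'` text, so the numbers appear above the bars.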

However, I tried the following code to tune the parameters with cross-validation:

    #Import libraries:
    import pandas as pd
    import xgboost as xgb
    from xgboost.sklearn import XGBClassifier
    from sklearn import cross_validation, metrics   #Additional sklearn functions
    from sklearn.grid_search import GridSearchCV   #Performing grid search

A function is created to get the optimum parameters and display the output in visual form.

    def modelfit(alg, dtrain, predictors, useTrainCV=True, cv_folds=5, early_stopping_rounds=50):
        # target is assumed to be the name of the label column, defined outside this function
        if useTrainCV:
            # Use xgb.cv with early stopping to choose the number of boosting rounds
            xgb_param = alg.get_xgb_params()
            xgtrain = xgb.DMatrix(dtrain[predictors].values, label=dtrain[target].values)
            cvresult = xgb.cv(xgb_param, xgtrain, num_boost_round=alg.get_params()['n_estimators'], nfold=cv_folds,
                metrics='auc', early_stopping_rounds=early_stopping_rounds, show_progress=False)
            alg.set_params(n_estimators=cvresult.shape[0])

        # Fit the algorithm on the data
        alg.fit(dtrain[predictors], dtrain[target], eval_metric='auc')

        # Predict on the training set
        dtrain_predictions = alg.predict(dtrain[predictors])
        dtrain_predprob = alg.predict_proba(dtrain[predictors])[:, 1]

        # Print the model report
        print("Accuracy : %.4g" % metrics.accuracy_score(dtrain[target].values, dtrain_predictions))
        print("AUC Score (Train): %f" % metrics.roc_auc_score(dtrain[target], dtrain_predprob))

        # Plot the feature importances
        feat_imp = pd.Series(alg.booster().get_fscore()).sort_values(ascending=False)
        feat_imp.plot(kind='bar', title='Feature Importances')


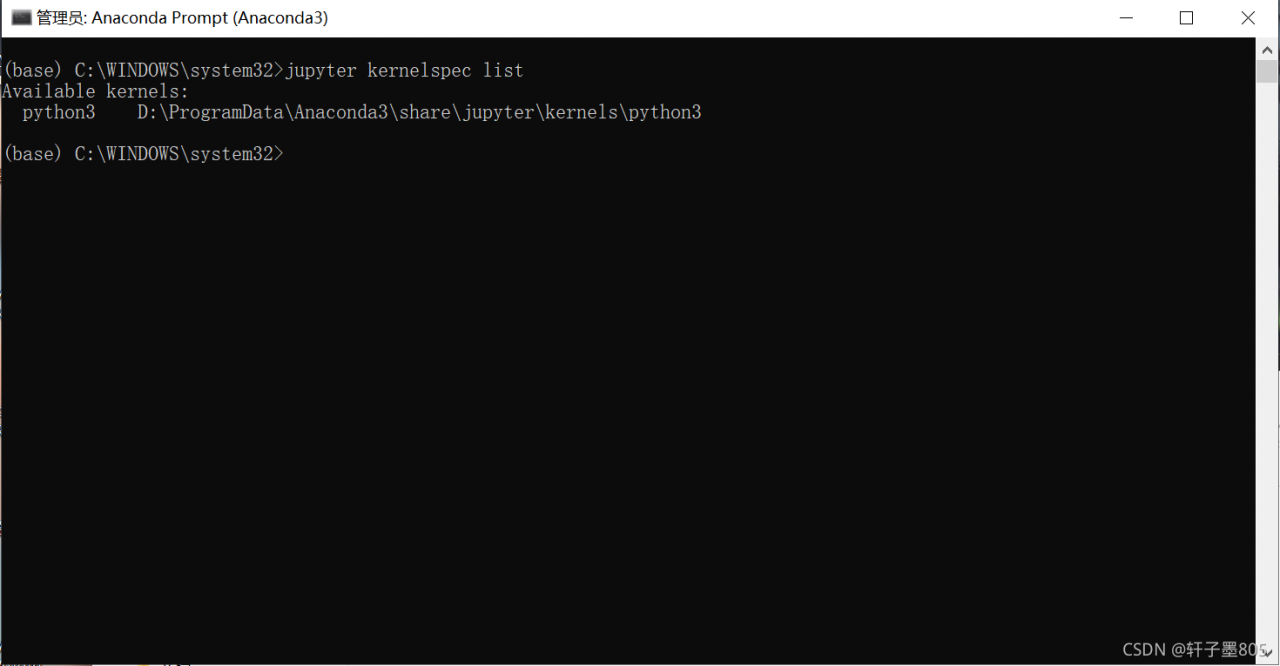

I have installed xgboost on Windows by following the above resources, since it is not yet available through pip.